Speedometer: Difference between revisions

en>Fishnet37222 Fixing vandalism |

en>Monkbot |


{{Machine learning bar}} | |||

{{about|decision trees in machine learning|the use of the term in decision analysis|Decision tree}} | |||

'''Decision tree learning''' uses a [[decision tree]] as a [[Predictive modelling|predictive model]] which maps observations about an item to conclusions about the item's target value. It is one of the predictive modelling approaches used in [[statistics]], [[data mining]] and [[machine learning]]. More descriptive names for such tree models are '''classification trees''' or '''regression trees'''. In these tree structures, [[leaf node|leaves]] represent class labels and branches represent [[Logical conjunction|conjunction]]s of features that lead to those class labels. | |||

In decision analysis, a decision tree can be used to visually and explicitly represent decisions and [[decision making]]. In [[data mining]], a decision tree describes data but not decisions; rather the resulting classification tree can be an input for [[decision making]]. This page deals with decision trees in [[data mining]]. | |||

==General== | |||

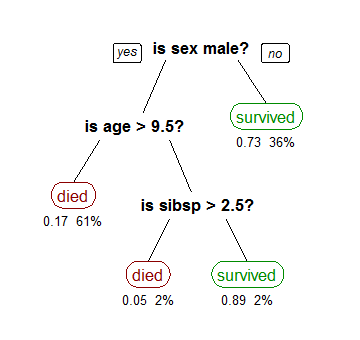

[[File:CART tree titanic survivors.png|frame|right|A tree showing survival of passengers on the [[Titanic]] ("sibsp" is the number of spouses or siblings aboard). The figures under the leaves show the probability of survival and the percentage of observations in the leaf.]] | |||

Decision tree learning is a method commonly used in data mining.<ref name="tdidt">{{Cite book
|last=Rokach
|first=Lior
|coauthors=Maimon, O.
|title=Data mining with decision trees: theory and applications
|year=2008
|publisher=World Scientific Pub Co Inc
|isbn=978-9812771711
|postscript=.
}}</ref> The goal is to create a model that predicts the value of a target variable based on several input variables. An example is shown on the right. Each [[interior node]] corresponds to one of the input variables; there are edges to children for each of the possible values of that input variable. Each leaf represents a value of the target variable given the values of the input variables represented by the path from the root to the leaf.

A decision tree is a simple representation for classifying examples. Decision tree learning is one of the most successful techniques for supervised classification learning. For this section, assume that all of the features have finite discrete domains, and there is a single target feature called the classification. Each element of the domain of the classification is called a class. | |||

A decision tree or a classification tree is a tree in which each internal (non-leaf) node is labeled with an input feature. The arcs coming from a node labeled with a feature are labeled with each of the possible values of the feature. Each leaf of the tree is labeled with a class or a probability distribution over the classes. | |||

A tree can be "learned" by splitting the source [[Set (mathematics)|set]] into subsets based on an attribute value test. This process is repeated on each derived subset in a recursive manner called [[recursive partitioning]]. The [[recursion]] is completed when the subset at a node has all the same value of the target variable, or when splitting no longer adds value to the predictions. This process of ''top-down induction of decision trees'' (TDIDT) <ref name="Quinlan86">Quinlan, J. R., (1986). Induction of Decision Trees. Machine Learning 1: 81-106, Kluwer Academic Publishers</ref> is an example of a [[greedy algorithm]], and it is by far the most common strategy for learning decision trees from data. | |||
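The recursive partitioning described above can be illustrated with a minimal sketch. It assumes categorical features stored in dictionaries and uses weighted Gini impurity as the greedy split criterion; the names <code>build_tree</code>, <code>split_cost</code> and <code>predict</code>, and the toy weather data, are hypothetical and are not taken from any particular algorithm cited here.

```python
from collections import Counter

def gini(labels):
    """Gini impurity of a non-empty list of class labels."""
    n = len(labels)
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def partition(rows, feature):
    """Group (features, label) rows by the value of one feature."""
    groups = {}
    for x, y in rows:
        groups.setdefault(x[feature], []).append((x, y))
    return groups

def split_cost(rows, feature):
    """Impurity of the subsets produced by a split, weighted by subset size."""
    groups = partition(rows, feature).values()
    return sum(len(g) / len(rows) * gini([y for _, y in g]) for g in groups)

def build_tree(rows, features):
    labels = [y for _, y in rows]
    majority = Counter(labels).most_common(1)[0][0]
    # Stop when the subset is pure or no features remain.
    if len(set(labels)) == 1 or not features:
        return majority
    # Greedy step: pick the feature whose split is purest right now.
    best = min(features, key=lambda f: split_cost(rows, f))
    # Stop when splitting no longer reduces impurity.
    if split_cost(rows, best) >= gini(labels):
        return majority
    remaining = [f for f in features if f != best]
    children = {value: build_tree(group, remaining)
                for value, group in partition(rows, best).items()}
    return {"feature": best, "children": children}

def predict(tree, x):
    """Follow branches until a leaf (a plain label) is reached.
    Raises KeyError for a feature value never seen during training."""
    while isinstance(tree, dict):
        tree = tree["children"][x[tree["feature"]]]
    return tree

# Toy data, invented for illustration only.
toy_rows = [
    ({"outlook": "sunny", "windy": "no"}, "play"),
    ({"outlook": "sunny", "windy": "yes"}, "play"),
    ({"outlook": "rainy", "windy": "no"}, "play"),
    ({"outlook": "rainy", "windy": "yes"}, "stay"),
]
toy_tree = build_tree(toy_rows, ["outlook", "windy"])
```

Because each split is chosen only by its immediate effect on impurity, the sketch is greedy in the sense described above and gives no guarantee of finding the smallest tree.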

In [[data mining]], decision trees can be described also as the combination of mathematical and computational techniques to aid the description, categorisation and generalisation of a given set of data. | |||

Data comes in records of the form: | |||

:<math>(\textbf{x},Y) = (x_1, x_2, x_3, ..., x_k, Y)</math> | |||

The dependent variable, Y, is the target variable that we are trying to understand, classify or generalize. The vector '''x''' is composed of the input variables, x<sub>1</sub>, x<sub>2</sub>, x<sub>3</sub> etc., that are used for that task. | |||

==Types== | |||

Decision trees used in [[data mining]] are of two main types: | |||

*'''Classification tree''' analysis is when the predicted outcome is the class to which the data belongs. | |||

*'''Regression tree''' analysis is when the predicted outcome can be considered a real number (e.g. the price of a house, or a patient’s length of stay in a hospital). | |||

The term '''Classification And Regression Tree (CART)''' analysis is an [[umbrella term]] used to refer to both of the above procedures, first introduced by [[Leo Breiman|Breiman]] et al.<ref name="bfos">{{Cite book
|last=Breiman
|first=Leo
|coauthors=Friedman, J. H., Olshen, R. A., & Stone, C. J.
|title=Classification and regression trees
|year=1984
|publisher=Wadsworth & Brooks/Cole Advanced Books & Software
|location=Monterey, CA
|isbn=978-0-412-04841-8
|postscript=.
}}</ref> Trees used for regression and trees used for classification have some similarities, but also some differences, such as the procedure used to determine where to split.<ref name="bfos"/>

Some techniques, often called ''ensemble'' methods, construct more than one decision tree: | |||

*'''[[Bootstrap aggregating|Bagging]]''' decision trees, an early ensemble method, builds multiple decision trees by repeatedly resampling training data with replacement, and voting the trees for a consensus prediction.<ref>Breiman, L. (1996). Bagging Predictors. "Machine Learning, 24": pp. 123-140.</ref> | |||

*A '''[[Random forest|Random Forest]]''' classifier uses a number of decision trees, in order to improve the classification rate. | |||

*'''[[Gradient boosted trees|Boosted Trees]]''' can be used for regression-type and classification-type problems.<ref>Friedman, J. H. (1999). ''Stochastic gradient boosting.'' Stanford University.</ref><ref>Hastie, T., Tibshirani, R., Friedman, J. H. (2001). ''The elements of statistical learning : Data mining, inference, and prediction.'' New York: Springer Verlag.</ref> | |||

*'''[[Rotation forest]]''' - in which every decision tree is trained by first applying [[principal component analysis]] (PCA) on a random subset of the input features.<ref>Rodriguez, J.J. and Kuncheva, L.I. and Alonso, C.J. (2006), Rotation forest: A new classifier ensemble method, IEEE Transactions on Pattern Analysis and Machine Intelligence, 28(10):1619-1630.</ref> | |||
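As a rough illustration of the bagging idea in the list above (resample with replacement, train one model per sample, then vote), the following sketch uses a deliberately simple one-feature learner in place of a full decision tree. The names <code>train_stump</code> and <code>bagging</code>, the default of 25 models, and the toy data are assumptions made for this example.

```python
import random
from collections import Counter

def train_stump(rows, feature=0):
    """Hypothetical weak learner: for each value of one feature,
    predict the majority label seen for that value."""
    overall = Counter(label for _, label in rows).most_common(1)[0][0]
    counts = {}
    for x, label in rows:
        counts.setdefault(x[feature], Counter())[label] += 1
    table = {value: c.most_common(1)[0][0] for value, c in counts.items()}
    # Fall back to the overall majority for values missing from this sample.
    return lambda x: table.get(x[feature], overall)

def bagging(rows, train, n_models=25, seed=0):
    """Train n_models learners on bootstrap samples and combine them by vote."""
    rng = random.Random(seed)
    # Each bootstrap sample draws len(rows) rows with replacement.
    models = [train(rng.choices(rows, k=len(rows))) for _ in range(n_models)]

    def predict(x):
        votes = Counter(model(x) for model in models)
        return votes.most_common(1)[0][0]

    return predict

# Toy data with one mislabeled row, invented for illustration only.
bag_rows = [(("a",), "pos")] * 10 + [(("a",), "neg")] + [(("b",), "neg")] * 10
ensemble = bagging(bag_rows, train_stump)
```

Any function that maps a list of rows to a predictor could be passed as <code>train</code>, including a full tree learner.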

'''Decision tree learning''' is the construction of a decision tree from class-labeled training tuples. A decision tree is a flow-chart-like structure, where each internal (non-leaf) node denotes a test on an attribute, each branch represents the outcome of a test, and each leaf (or terminal) node holds a class label. The topmost node in a tree is the root node. | |||

There are many specific decision-tree algorithms. Notable ones include: | |||

* [[ID3 algorithm|ID3]] (Iterative Dichotomiser 3) | |||

* [[C4.5 algorithm|C4.5]] (successor of ID3) | |||

* [[Predictive_analytics#Classification_and_regression_trees|CART]] (Classification And Regression Tree) | |||

* [[CHAID]] (CHi-squared Automatic Interaction Detector). Performs multi-level splits when computing classification trees.<ref>{{Cite journal | doi = 10.2307/2986296 | last1 = Kass | first1 = G. V. | year = 1980 | title = An exploratory technique for investigating large quantities of categorical data | jstor = 2986296| journal = Applied Statistics | volume = 29 | issue = 2| pages = 119–127 }}</ref> | |||

* [[Multivariate adaptive regression splines|MARS]]: extends decision trees to better handle numerical data. | |||

ID3 and CART were invented independently at around the same time (between 1970 and 1980), yet follow a similar approach for learning decision trees from training tuples.

==Formulae== | |||

Algorithms for constructing decision trees usually work top-down, by choosing a variable at each step that best splits the set of items.<ref>{{cite journal |last1=Rokach |first1=L. |last2=Maimon |first2=O. |title=Top-down induction of decision trees classifiers-a survey |journal=IEEE Transactions on Systems, Man, and Cybernetics, Part C |volume=35 |pages=476–487 |year=2005 |doi=10.1109/TSMCC.2004.843247 |issue=4}}</ref> Different algorithms use different metrics for measuring "best". These generally measure the homogeneity of the target variable within the subsets. Some examples are given below. These metrics are applied to each candidate subset, and the resulting values are combined (e.g., averaged) to provide a measure of the quality of the split. | |||

===Gini impurity=== | |||

{{main|Gini coefficient}} | |||

Used by the CART (classification and regression tree) algorithm, Gini impurity is a measure of how often a randomly chosen element from the set would be incorrectly labeled if it were randomly labeled according to the distribution of labels in the subset. Gini impurity can be computed by summing the probability of each item being chosen times the probability of a mistake in categorizing that item. It reaches its minimum (zero) when all cases in the node fall into a single target category. | |||

To compute Gini impurity for a set of items, suppose i takes on values in {1, 2, ..., m}, and let f<sub>i</sub> be the fraction of items labeled with value i in the set. | |||

<math>I_{G}(f) = \sum_{i=1}^{m} f_i (1-f_i) = \sum_{i=1}^{m} (f_i - {f_i}^2) = \sum_{i=1}^m f_i - \sum_{i=1}^{m} {f_i}^2 = 1 - \sum^{m}_{i=1} {f_i}^{2}</math> | |||
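
The formula above translates directly into a few lines of code. This is a minimal sketch that assumes the set is given as a non-empty list of labels; the function name <code>gini_impurity</code> is chosen for this example.

```python
from collections import Counter

def gini_impurity(labels):
    """Return 1 - sum(f_i ** 2), where f_i is the fraction of items with label i.
    Assumes labels is a non-empty list."""
    n = len(labels)
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

pure = gini_impurity(["yes", "yes", "yes", "yes"])   # a single class
mixed = gini_impurity(["yes", "yes", "no", "no"])    # two equally likely classes
```

A node whose items all share one label has impurity zero, and for two classes the impurity is largest when they are equally frequent.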

===Information gain=== | |||

{{main|Information gain in decision trees}} | |||

Used by the [[ID3 algorithm|ID3]], [[C4.5 algorithm|C4.5]] and C5.0 tree-generation algorithms. [[Information gain in decision trees|Information gain]] is based on the concept of [[information entropy|entropy]] from [[information theory]]. | |||

<math>I_{E}(f) = - \sum^{m}_{i=1} f_i \log_2 f_i</math>
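
A minimal sketch of the entropy formula, and of information gain computed as the parent's entropy minus the size-weighted entropy of the subsets produced by a split. The function names and the toy labels are assumptions for illustration.

```python
import math
from collections import Counter

def entropy(labels):
    """Shannon entropy in bits of a non-empty list of class labels."""
    n = len(labels)
    return sum(-(c / n) * math.log2(c / n) for c in Counter(labels).values())

def information_gain(parent, subsets):
    """Entropy of the parent minus the size-weighted entropy of the subsets."""
    n = len(parent)
    remainder = sum(len(s) / n * entropy(s) for s in subsets)
    return entropy(parent) - remainder

parent = ["yes", "yes", "no", "no"]
perfect_split = information_gain(parent, [["yes", "yes"], ["no", "no"]])
useless_split = information_gain(parent, [["yes", "no"], ["yes", "no"]])
```

A split that separates the classes completely recovers the full entropy of the parent, while a split that leaves the class proportions unchanged has zero gain.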

==Decision tree advantages== | |||

Amongst other data mining methods, decision trees have various advantages: | |||

* '''Simple to understand and interpret.''' People are able to understand decision tree models after a brief explanation. | |||

* '''Requires little data preparation.''' Other techniques often require data normalisation, the creation of [[dummy variable (statistics)|dummy variables]] and the removal of blank values.

* '''Able to handle both numerical and [[Categorical variable|categorical]] data.''' Other techniques are usually specialised in analysing datasets that have only one type of variable. (For example, relation rules can be used only with nominal variables while neural networks can be used only with numerical variables.) | |||

* '''Uses a [[white box (software engineering)|white box]] model.''' If a given situation is observable in a model, the condition is easily explained by Boolean logic. (An example of a black box model is an [[artificial neural network]], since the explanation for the results is difficult to understand.)

* '''Possible to validate a model using statistical tests.''' That makes it possible to account for the reliability of the model. | |||

* '''[[Robust statistics|Robust]].''' Performs well even if its assumptions are somewhat violated by the true model from which the data were generated. | |||

* '''Performs well with large datasets.''' Large amounts of data can be analysed using standard computing resources in reasonable time. | |||

==Limitations== | |||

* The problem of learning an optimal decision tree is known to be [[NP-complete]] under several aspects of optimality and even for simple concepts.<ref>{{Cite journal | doi = 10.1016/0020-0190(76)90095-8 | last1 = Hyafil | first1 = Laurent | last2 = Rivest | first2 = RL | year = 1976 | title = Constructing Optimal Binary Decision Trees is NP-complete | url = | journal = Information Processing Letters | volume = 5 | issue = 1| pages = 15–17 }}</ref><ref>Murthy S. (1998). Automatic construction of decision trees from data: A multidisciplinary survey. ''Data Mining and Knowledge Discovery''</ref> Consequently, practical decision-tree learning algorithms are based on heuristics such as the [[greedy algorithm]] where locally-optimal decisions are made at each node. Such algorithms cannot guarantee to return the globally-optimal decision tree. | |||

* Decision-tree learners can create over-complex trees that do not generalise well from the training data. (This is known as [[overfitting]].<ref>{{cite doi|10.1007/978-1-84628-766-4}}</ref>) Mechanisms such as [[Pruning (decision trees)|pruning]] are necessary to avoid this problem. | |||

* There are concepts that are hard to learn because decision trees do not express them easily, such as [[XOR]], [[parity bit#Parity|parity]] or [[multiplexer]] problems. In such cases, the decision tree becomes prohibitively large. Approaches to solve the problem involve either changing the representation of the problem domain (known as propositionalisation)<ref>{{cite doi|10.1007/b13700}}</ref> or using learning algorithms based on more expressive representations (such as [[statistical relational learning]] or [[inductive logic programming]]). | |||

* For data including categorical variables with different numbers of levels, [[information gain in decision trees]] is biased in favor of those attributes with more levels.<ref>{{cite conference
|author=Deng, H.|coauthors=Runger, G.; Tuv, E.
|title=Bias of importance measures for multi-valued attributes and solutions
|conference=Proceedings of the 21st International Conference on Artificial Neural Networks (ICANN)|year=2011|pages= 293–300 }}</ref>
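
One way to see why a concept such as XOR is awkward for greedy tree learners is to compute the information gain of each input on its own. The sketch below assumes two binary inputs and an entropy-based criterion; the helper names are hypothetical.

```python
import math
from collections import Counter
from itertools import product

def entropy(labels):
    """Shannon entropy in bits of a non-empty list of class labels."""
    n = len(labels)
    return sum(-(c / n) * math.log2(c / n) for c in Counter(labels).values())

def gain(rows, feature):
    """Information gain of splitting (inputs, label) rows on one input position."""
    subsets = {}
    for x, y in rows:
        subsets.setdefault(x[feature], []).append(y)
    labels = [y for _, y in rows]
    remainder = sum(len(s) / len(rows) * entropy(s) for s in subsets.values())
    return entropy(labels) - remainder

# All four input combinations, labeled with the XOR of the two inputs.
xor_rows = [((a, b), a ^ b) for a, b in product([0, 1], repeat=2)]
xor_gains = [gain(xor_rows, 0), gain(xor_rows, 1)]
```

Both gains are zero: neither input alone tells the learner anything about the label, so a criterion that looks one split ahead has no signal to follow, even though a tree that tests both inputs classifies the data perfectly.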

==Extensions== | |||

===Decision graphs=== | |||

In a decision tree, all paths from the root node to the leaf node proceed by way of conjunction, or ''AND''. | |||

In a decision graph, it is possible to use disjunctions (ORs) to join two or more paths together using [[Minimum message length]] (MML).<ref>http://citeseer.ist.psu.edu/oliver93decision.html</ref> Decision graphs have been further extended to allow for previously unstated new attributes to be learnt dynamically and used at different places within the graph.<ref>[http://www.csse.monash.edu.au/~dld/Publications/2003/Tan+Dowe2003_MMLDecisionGraphs.pdf Tan & Dowe (2003)]</ref> The more general coding scheme results in better predictive accuracy and log-loss probabilistic scoring.{{Citation needed|date=January 2012}} In general, decision graphs infer models with fewer leaves than decision trees.

===Alternative search methods=== | |||

Evolutionary algorithms have been used to avoid local optimal decisions and search the decision tree space with little ''a priori'' bias.<ref>Papagelis A., Kalles D.(2001). Breeding Decision Trees Using Evolutionary Techniques, Proceedings of the Eighteenth International Conference on Machine Learning, p.393-400, June 28-July 01, 2001</ref><ref>Barros, Rodrigo C., Basgalupp, M. P., Carvalho, A. C. P. L. F., Freitas, Alex A. (2011). [http://ieeexplore.ieee.org/xpls/abs_all.jsp?arnumber=5928432 A Survey of Evolutionary Algorithms for Decision-Tree Induction]. IEEE Transactions on Systems, Man and Cybernetics, Part C: Applications and Reviews, vol. 42, n. 3, p. 291-312, May 2012.</ref> | |||

A tree can also be sampled using Markov chain Monte Carlo (MCMC) in a Bayesian paradigm.<ref>Chipman, Hugh A., Edward I. George, and Robert E. McCulloch. "Bayesian CART model search." Journal of the American Statistical Association 93.443 (1998): 935-948.</ref>

The tree can be searched for in a bottom-up fashion.<ref>Barros R. C., Cerri R., Jaskowiak P. A., Carvalho, A. C. P. L. F., [http://dx.doi.org/10.1109/ISDA.2011.6121697 A bottom-up oblique decision tree induction algorithm]. Proceedings of the 11th International Conference on Intelligent Systems Design and Applications (ISDA 2011).</ref> | |||

==See also== | |||

* [[Decision-tree pruning|Decision tree pruning]] | |||

* [[Binary decision diagram]] | |||

* [[CHAID]] | |||

* [[Predictive_analytics#Classification_and_regression_trees|CART]] | |||

* [[ID3 algorithm]] | |||

* [[C4.5 algorithm]] | |||

* [[Decision stump]] | |||

* [[Incremental decision tree]] | |||

* [[Alternating decision tree]] | |||

* [[Structured data analysis (statistics)]] | |||

==Implementations== | |||

Many data mining software packages provide implementations of one or more decision tree algorithms. Several examples include Salford Systems CART (which licensed the proprietary code of the original CART authors<ref name="bfos"/>), [[SPSS Modeler|IBM SPSS Modeler]], [[RapidMiner]], [[SAS (software)#Components|SAS Enterprise Miner]], [[R (programming_language)|R]] (an open source software environment for statistical computing which includes several CART implementations such as rpart, party and randomForest packages), [[Weka (machine learning)|Weka]] (a free and open-source data mining suite, contains many decision tree algorithms), [[Orange (software)|Orange]] (a free data mining software suite, which includes the tree module [http://www.ailab.si/orange/doc/modules/orngTree.htm orngTree]), [[KNIME]], [[Microsoft SQL Server]] [http://technet.microsoft.com/en-us/library/cc645868.aspx], and [[scikit-learn]] (a free and open-source machine learning library for the [[Python (programming language)|Python]] programming language). | |||

==References== | |||

{{Reflist}} | |||

==External links== | |||

*[http://onlamp.com/lpt/a/6464 Building Decision Trees in Python] From O'Reilly. | |||

*[http://www.oreillynet.com/mac/blog/2007/06/an_addendum_to_building_decisi.html An Addendum to "Building Decision Trees in Python"] From O'Reilly. | |||

*[http://people.revoledu.com/kardi/tutorial/DecisionTree/index.html Decision Trees Tutorial] using Microsoft Excel. | |||

*[http://aitopics.org/topic/decision-tree-learning Decision Trees page at aitopics.org], a page with commented links. | |||

*[http://ai4r.org/index.html Decision tree implementation in Ruby (AI4R)] | |||

*[http://www.cs.uwaterloo.ca/~mgrzes/code/evoldectrees/ Evolutionary Learning of Decision Trees in C++] | |||

*[https://github.com/kobaj/JavaDecisionTree Java implementation of Decision Trees based on Information Gain] | |||

{{DEFAULTSORT:Decision Tree Learning}} | |||

[[Category:Data mining]] | |||

[[Category:Decision trees]] | |||

[[Category:Classification algorithms]] | |||

[[Category:Machine learning]] | |||

Revision as of 09:16, 30 January 2014


Decision tree learning uses a decision tree as a predictive model which maps observations about an item to conclusions about the item's target value. It is one of the predictive modelling approaches used in statistics, data mining and machine learning. More descriptive names for such tree models are classification trees or regression trees. In these tree structures, leaves represent class labels and branches represent conjunctions of features that lead to those class labels.

In decision analysis, a decision tree can be used to visually and explicitly represent decisions and decision making. In data mining, a decision tree describes data but not decisions; rather the resulting classification tree can be an input for decision making. This page deals with decision trees in data mining.

General

Decision tree learning is a method commonly used in data mining.[1] The goal is to create a model that predicts the value of a target variable based on several input variables. An example is shown on the right. Each interior node corresponds to one of the input variables; there are edges to children for each of the possible values of that input variable. Each leaf represents a value of the target variable given the values of the input variables represented by the path from the root to the leaf.

A decision tree is a simple representation for classifying examples. Decision tree learning is one of the most successful techniques for supervised classification learning. For this section, assume that all of the features have finite discrete domains, and there is a single target feature called the classification. Each element of the domain of the classification is called a class. A decision tree or a classification tree is a tree in which each internal (non-leaf) node is labeled with an input feature. The arcs coming from a node labeled with a feature are labeled with each of the possible values of the feature. Each leaf of the tree is labeled with a class or a probability distribution over the classes.

A tree can be "learned" by splitting the source set into subsets based on an attribute value test. This process is repeated on each derived subset in a recursive manner called recursive partitioning. The recursion is completed when the subset at a node has all the same value of the target variable, or when splitting no longer adds value to the predictions. This process of top-down induction of decision trees (TDIDT) [2] is an example of a greedy algorithm, and it is by far the most common strategy for learning decision trees from data.

In data mining, decision trees can be described also as the combination of mathematical and computational techniques to aid the description, categorisation and generalisation of a given set of data.

Data comes in records of the form:

<math>(\textbf{x},Y) = (x_1, x_2, x_3, ..., x_k, Y)</math>

The dependent variable, Y, is the target variable that we are trying to understand, classify or generalize. The vector x is composed of the input variables, x1, x2, x3 etc., that are used for that task.

Types

Decision trees used in data mining are of two main types:

- Classification tree analysis is when the predicted outcome is the class to which the data belongs.

- Regression tree analysis is when the predicted outcome can be considered a real number (e.g. the price of a house, or a patient’s length of stay in a hospital).

The term Classification And Regression Tree (CART) analysis is an umbrella term used to refer to both of the above procedures, first introduced by Breiman et al.[3] Trees used for regression and trees used for classification have some similarities - but also some differences, such as the procedure used to determine where to split.[3]

Some techniques, often called ensemble methods, construct more than one decision tree:

- Bagging decision trees, an early ensemble method, builds multiple decision trees by repeatedly resampling training data with replacement, and voting the trees for a consensus prediction.[4]

- A Random Forest classifier uses a number of decision trees, in order to improve the classification rate.

- Boosted Trees can be used for regression-type and classification-type problems.[5][6]

- Rotation forest - in which every decision tree is trained by first applying principal component analysis (PCA) on a random subset of the input features.[7]

Decision tree learning is the construction of a decision tree from class-labeled training tuples. A decision tree is a flow-chart-like structure, where each internal (non-leaf) node denotes a test on an attribute, each branch represents the outcome of a test, and each leaf (or terminal) node holds a class label. The topmost node in a tree is the root node.

There are many specific decision-tree algorithms. Notable ones include:

- ID3 (Iterative Dichotomiser 3)

- C4.5 (successor of ID3)

- CART (Classification And Regression Tree)

- CHAID (CHi-squared Automatic Interaction Detector). Performs multi-level splits when computing classification trees.[8]

- MARS: extends decision trees to better handle numerical data.

ID3 and CART were invented independently at around the same time (between 1970 and 1980), yet follow a similar approach for learning decision trees from training tuples.

Formulae

Algorithms for constructing decision trees usually work top-down, by choosing a variable at each step that best splits the set of items.[9] Different algorithms use different metrics for measuring "best". These generally measure the homogeneity of the target variable within the subsets. Some examples are given below. These metrics are applied to each candidate subset, and the resulting values are combined (e.g., averaged) to provide a measure of the quality of the split.

Gini impurity

Used by the CART (classification and regression tree) algorithm, Gini impurity is a measure of how often a randomly chosen element from the set would be incorrectly labeled if it were randomly labeled according to the distribution of labels in the subset. Gini impurity can be computed by summing the probability of each item being chosen times the probability of a mistake in categorizing that item. It reaches its minimum (zero) when all cases in the node fall into a single target category.

To compute Gini impurity for a set of items, suppose i takes on values in {1, 2, ..., m}, and let fi be the fraction of items labeled with value i in the set.

<math>I_{G}(f) = \sum_{i=1}^{m} f_i (1-f_i) = 1 - \sum^{m}_{i=1} {f_i}^{2}</math>

Information gain

Used by the ID3, C4.5 and C5.0 tree-generation algorithms. Information gain is based on the concept of entropy from information theory.

<math>I_{E}(f) = - \sum^{m}_{i=1} f_i \log_2 f_i</math>

Decision tree advantages

Amongst other data mining methods, decision trees have various advantages:

- Simple to understand and interpret. People are able to understand decision tree models after a brief explanation.

- Requires little data preparation. Other techniques often require data normalisation, the creation of dummy variables and the removal of blank values.

- Able to handle both numerical and categorical data. Other techniques are usually specialised in analysing datasets that have only one type of variable. (For example, relation rules can be used only with nominal variables while neural networks can be used only with numerical variables.)

- Uses a white box model. If a given situation is observable in a model, the condition is easily explained by Boolean logic. (An example of a black box model is an artificial neural network, since the explanation for the results is difficult to understand.)

- Possible to validate a model using statistical tests. That makes it possible to account for the reliability of the model.

- Robust. Performs well even if its assumptions are somewhat violated by the true model from which the data were generated.

- Performs well with large datasets. Large amounts of data can be analysed using standard computing resources in reasonable time.

Limitations

- The problem of learning an optimal decision tree is known to be NP-complete under several aspects of optimality and even for simple concepts.[10][11] Consequently, practical decision-tree learning algorithms are based on heuristics such as the greedy algorithm where locally-optimal decisions are made at each node. Such algorithms cannot guarantee to return the globally-optimal decision tree.

- Decision-tree learners can create over-complex trees that do not generalise well from the training data. (This is known as overfitting.[12]) Mechanisms such as pruning are necessary to avoid this problem.

- There are concepts that are hard to learn because decision trees do not express them easily, such as XOR, parity or multiplexer problems. In such cases, the decision tree becomes prohibitively large. Approaches to solve the problem involve either changing the representation of the problem domain (known as propositionalisation)[13] or using learning algorithms based on more expressive representations (such as statistical relational learning or inductive logic programming).

- For data including categorical variables with different numbers of levels, information gain in decision trees is biased in favor of those attributes with more levels.[14]

Extensions

Decision graphs

In a decision tree, all paths from the root node to the leaf node proceed by way of conjunction, or AND. In a decision graph, it is possible to use disjunctions (ORs) to join two or more paths together using Minimum message length (MML).[15] Decision graphs have been further extended to allow for previously unstated new attributes to be learnt dynamically and used at different places within the graph.[16] The more general coding scheme results in better predictive accuracy and log-loss probabilistic scoring. In general, decision graphs infer models with fewer leaves than decision trees.

Alternative search methods

Evolutionary algorithms have been used to avoid local optimal decisions and search the decision tree space with little a priori bias.[17][18]

A tree can also be sampled using Markov chain Monte Carlo (MCMC) in a Bayesian paradigm.[19]

The tree can be searched for in a bottom-up fashion.[20]

See also

- Decision tree pruning

- Binary decision diagram

- CHAID

- CART

- ID3 algorithm

- C4.5 algorithm

- Decision stump

- Incremental decision tree

- Alternating decision tree

- Structured data analysis (statistics)

Implementations

Many data mining software packages provide implementations of one or more decision tree algorithms. Several examples include Salford Systems CART (which licensed the proprietary code of the original CART authors[3]), IBM SPSS Modeler, RapidMiner, SAS Enterprise Miner, R (an open source software environment for statistical computing which includes several CART implementations such as rpart, party and randomForest packages), Weka (a free and open-source data mining suite, contains many decision tree algorithms), Orange (a free data mining software suite, which includes the tree module orngTree), KNIME, Microsoft SQL Server [1], and scikit-learn (a free and open-source machine learning library for the Python programming language).

References


External links

- Building Decision Trees in Python From O'Reilly.

- An Addendum to "Building Decision Trees in Python" From O'Reilly.

- Decision Trees Tutorial using Microsoft Excel.

- Decision Trees page at aitopics.org, a page with commented links.

- Decision tree implementation in Ruby (AI4R)

- Evolutionary Learning of Decision Trees in C++

- Java implementation of Decision Trees based on Information Gain

- ↑ Rokach, Lior; Maimon, O. (2008). Data mining with decision trees: theory and applications. World Scientific Pub Co Inc. ISBN 978-9812771711.

- ↑ Quinlan, J. R. (1986). Induction of Decision Trees. Machine Learning 1: 81-106, Kluwer Academic Publishers

- ↑

- ↑ Breiman, L. (1996). Bagging Predictors. Machine Learning, 24: pp. 123-140.

- ↑ Friedman, J. H. (1999). Stochastic gradient boosting. Stanford University.

- ↑ Hastie, T., Tibshirani, R., Friedman, J. H. (2001). The elements of statistical learning : Data mining, inference, and prediction. New York: Springer Verlag.

- ↑ Rodriguez, J.J. and Kuncheva, L.I. and Alonso, C.J. (2006), Rotation forest: A new classifier ensemble method, IEEE Transactions on Pattern Analysis and Machine Intelligence, 28(10):1619-1630.

- ↑

- ↑

- ↑

- ↑ Murthy S. (1998). Automatic construction of decision trees from data: A multidisciplinary survey. Data Mining and Knowledge Discovery

- ↑ Template:Cite doi

- ↑ Template:Cite doi

- ↑

- ↑ http://citeseer.ist.psu.edu/oliver93decision.html

- ↑ Tan & Dowe (2003)

- ↑ Papagelis A., Kalles D.(2001). Breeding Decision Trees Using Evolutionary Techniques, Proceedings of the Eighteenth International Conference on Machine Learning, p.393-400, June 28-July 01, 2001

- ↑ Barros, Rodrigo C., Basgalupp, M. P., Carvalho, A. C. P. L. F., Freitas, Alex A. (2011). A Survey of Evolutionary Algorithms for Decision-Tree Induction. IEEE Transactions on Systems, Man and Cybernetics, Part C: Applications and Reviews, vol. 42, n. 3, p. 291-312, May 2012.

- ↑ Chipman, Hugh A., Edward I. George, and Robert E. McCulloch. "Bayesian CART model search." Journal of the American Statistical Association 93.443 (1998): 935-948.

- ↑ Barros R. C., Cerri R., Jaskowiak P. A., Carvalho, A. C. P. L. F., A bottom-up oblique decision tree induction algorithm. Proceedings of the 11th International Conference on Intelligent Systems Design and Applications (ISDA 2011).