Main Page: Difference between revisions


{{Cleanup|reason=this article needs neutral and better phrasing|date=November 2012}}

{{Modulation techniques}}

'''Amplitude-shift keying''' ('''ASK''') is a form of [[amplitude modulation]] that represents [[Digital data|digital]] [[data]] as variations in the [[amplitude]] of a [[carrier wave]].

In a binary ASK system, the symbol 1 is represented by transmitting a carrier wave of fixed amplitude and fixed frequency for a bit duration of ''T'' seconds. If the signal value is 1, the carrier signal is transmitted; otherwise, a signal of zero amplitude is transmitted.

Any digital modulation scheme uses a [[wiktionary:finite|finite]] number of distinct signals to represent digital data. ASK uses a finite number of amplitudes, each assigned a unique pattern of [[bit|binary digit]]s. Usually, each amplitude encodes an equal number of bits. Each pattern of bits forms the [[Symbol (data)|symbol]] that is represented by the particular amplitude. The [[demodulator]], which is designed specifically for the symbol-set used by the modulator, determines the amplitude of the received signal and maps it back to the symbol it represents, thus recovering the original data. [[Frequency]] and [[Phase (waves)|phase]] of the carrier are kept constant.

Like [[Amplitude modulation|AM]], ASK is linear and sensitive to atmospheric noise, distortion, and propagation conditions on different routes in the [[PSTN]]. Both ASK modulation and demodulation processes are relatively inexpensive. The ASK technique is also commonly used to transmit [[digital data]] over optical fiber. For LED transmitters, binary 1 is represented by a short pulse of light and binary 0 by the absence of light. Laser transmitters normally have a fixed "bias" current that causes the device to emit a low light level. This low level represents binary 0, while a higher-amplitude lightwave represents binary 1.

The simplest and most common form of ASK operates as a switch, using the presence of a carrier wave to indicate a binary one and its absence to indicate a binary zero. This type of modulation is called [[on-off keying]] (OOK), and is used at radio frequencies to transmit Morse code (referred to as continuous wave operation).
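The switching behaviour of on-off keying can be illustrated with a minimal sketch. The function name `ook_waveform` and all of its parameters (carrier frequency, samples per bit, amplitude) are hypothetical choices made for illustration, not values taken from the article.

```python
import math

def ook_waveform(bits, carrier_hz=4.0, bit_duration=1.0,
                 samples_per_bit=32, amplitude=1.0):
    """Return samples of an on-off keyed carrier for a sequence of 0/1 bits.

    Illustrative assumption: the carrier is a cosine that keeps running in
    time, and it is simply gated on (bit 1) or off (bit 0).
    """
    samples = []
    for n, bit in enumerate(bits):
        for k in range(samples_per_bit):
            t = (n + k / samples_per_bit) * bit_duration
            carrier = amplitude * math.cos(2 * math.pi * carrier_hz * t)
            # Carrier present for a binary one, absent for a binary zero.
            samples.append(carrier if bit else 0.0)
    return samples

wave = ook_waveform([1, 0, 1, 1])
print(len(wave))  # 4 bits * 32 samples per bit
```

Every sample inside a zero bit is exactly 0.0, which is the "absence of carrier" described above.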

More sophisticated encoding schemes have been developed which represent data in groups using additional amplitude levels. For instance, a four-level encoding scheme can represent two bits with each shift in amplitude; an eight-level scheme can represent three bits; and so on. These forms of amplitude-shift keying require a high signal-to-noise ratio for their recovery, as by their nature much of the signal is transmitted at reduced power.
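As a hedged illustration of the grouping described above, the sketch below packs bits into level indices. It assumes the number of levels is a power of two and uses a natural binary mapping; practical systems often use other mappings such as Gray coding, and the function names are hypothetical.

```python
def bits_per_symbol(levels):
    """Number of bits carried by one symbol of a `levels`-level scheme."""
    if levels < 2 or levels & (levels - 1):
        raise ValueError("levels must be a power of two, at least 2")
    return levels.bit_length() - 1

def bits_to_level_indices(bits, levels):
    """Group bits (most significant first) into level indices 0..levels-1.

    Natural binary mapping is an assumption made for this sketch.
    """
    k = bits_per_symbol(levels)
    if len(bits) % k:
        raise ValueError("number of bits must be a multiple of bits per symbol")
    indices = []
    for start in range(0, len(bits), k):
        value = 0
        for bit in bits[start:start + k]:
            value = (value << 1) | bit
        indices.append(value)
    return indices

print(bits_per_symbol(4), bits_per_symbol(8))        # 2 3
print(bits_to_level_indices([1, 0, 0, 1, 1, 1], 4))  # [2, 1, 3]
```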

[[File:Ask ideal diagram.png|center|600px|thumbnail|ASK diagram]]

An ASK system can be divided into three blocks. The first one represents the transmitter, the second one is a linear model of the effects of the channel, and the third one shows the structure of the receiver. The following notation is used:

*''h<sub>t</sub>''(''t'') is the carrier signal for the transmission

*''h<sub>c</sub>''(''t'') is the impulse response of the channel

*''n''(''t'') is the noise introduced by the channel

*''h<sub>r</sub>''(''t'') is the impulse response of the filter at the receiver

*''L'' is the number of levels that are used for transmission

*''T<sub>s</sub>'' is the time between the generation of two symbols

Different symbols are represented with different voltages. If the maximum allowed value for the voltage is ''A'', then all the possible values are in the range [−''A'', ''A''] and they are given by:

<math>v_i = \frac{2 A}{L-1} i - A; \quad i = 0,1,\dots, L-1</math>

The difference between two adjacent voltage levels is:

<math>\Delta = \frac{2 A}{L - 1} </math>
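The two formulas above can be checked numerically with a short sketch. The function name `ask_levels` and the example values ''A'' = 3 and ''L'' = 4 are illustrative assumptions.

```python
def ask_levels(A, L):
    """Return (levels, delta) for an L-level ASK scheme with peak voltage A.

    Implements v_i = 2*A/(L-1)*i - A for i = 0..L-1 and delta = 2*A/(L-1).
    """
    if L < 2:
        raise ValueError("at least two levels are required")
    delta = 2 * A / (L - 1)
    levels = [delta * i - A for i in range(L)]
    return levels, delta

levels, delta = ask_levels(3.0, 4)
print(levels)  # [-3.0, -1.0, 1.0, 3.0]
print(delta)   # 2.0
```

The levels are symmetric about zero, and the outermost ones sit exactly at −''A'' and ''A''.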

Considering the picture, the symbols ''v''[''n''] are generated randomly by the source ''S''; the impulse generator then creates impulses with an area of ''v''[''n'']. These impulses are passed through the filter ''h<sub>t</sub>'' and sent through the channel. In other words, for each symbol a carrier wave with the corresponding amplitude is sent.

At the output of the transmitter, the signal ''s''(''t'') can be expressed in the form:

<math>s (t) = \sum_{n = -\infty}^\infty v[n] \cdot h_t (t - n T_s)</math>

In the receiver, after filtering through ''h<sub>r</sub>''(''t''), the signal is:

<math>z(t) = n_r (t) + \sum_{n = -\infty}^{\infty} v[n] \cdot g (t - n T_s)</math>

where we use the notation:

<math>n_r (t) = n(t) * h_r (t)</math>

<math>g(t) = h_t (t) * h_c (t) * h_r (t)</math>

where * indicates the convolution between two signals. After the A/D conversion the signal ''z''[''k''] can be expressed in the form:

<math>z[k] = n_r [k] + v[k] g[0] + \sum_{n \neq k} v[n] g[k-n]</math>

In this relationship, the second term represents the symbol to be extracted. The others are unwanted: the first one is the effect of noise, and the third one is due to intersymbol interference.

If the filters are chosen so that ''g''(''t'') satisfies the Nyquist ISI criterion, then there is no intersymbol interference and the value of the sum is zero, so:

<math>z[k] = n_r [k] + v[k] g[0]</math>

and the transmission is affected only by noise.
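The decomposition of ''z''[''k''] into noise, wanted symbol and intersymbol interference can be sketched as follows. This is only an illustration under stated assumptions: the symbol sequence is finite, the sampled pulse ''g'' is stored as a hypothetical dictionary from integer lag to value (missing lags count as zero), and the tap values are invented for the example.

```python
def received_sample_terms(v, g, k, noise=0.0):
    """Split z[k] = n_r[k] + v[k]*g[0] + sum_{n != k} v[n]*g[k-n] into its terms.

    v: list of transmitted symbol values (finite sequence, an assumption)
    g: dict mapping integer lag -> sample of the combined pulse g
    """
    wanted = v[k] * g.get(0, 0.0)
    isi = sum(v[n] * g.get(k - n, 0.0) for n in range(len(v)) if n != k)
    return noise, wanted, isi

v = [-3.0, 1.0, 3.0, -1.0]

# Pulse with non-zero neighbouring taps: the ISI term does not vanish.
g_with_isi = {-1: 0.1, 0: 1.0, 1: 0.2}
_, wanted, isi = received_sample_terms(v, g_with_isi, k=1)

# Pulse that is zero at every non-zero lag, as the Nyquist ISI criterion requires.
g_nyquist = {0: 1.0}
_, wanted_nyq, isi_nyq = received_sample_terms(v, g_nyquist, k=1)

print(wanted, isi)          # wanted symbol term and a non-zero ISI term
print(wanted_nyq, isi_nyq)  # same wanted term, ISI term equal to zero
```

With the second pulse only the noise term and ''v''[''k''] ''g''[0] remain, which matches the simplified expression above.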

== Probability of error ==

The probability density function of the received value can be modelled by a Gaussian function whose mean is the value that was sent and whose variance is given by:

<math>\sigma_N^2 = \int_{-\infty}^{+\infty} \Phi_N (f) \cdot |H_r (f)|^2 df</math>

where <math>\Phi_N (f)</math> is the spectral density of the noise within the band and <math>H_r (f)</math> is the continuous Fourier transform of the impulse response of the receiver filter <math>h_r (t)</math>.
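As a rough numerical illustration of the variance integral, the sketch below approximates it with a midpoint rule over a finite frequency range. Everything concrete here is an assumption made for the example: the flat noise density of 10<sup>−3</sup>, the ideal low-pass receiver filter with a cutoff of 100 Hz, the finite integration limits, and the function name `noise_variance`.

```python
def noise_variance(psd, h_r, f_min, f_max, steps=10_000):
    """Approximate the integral of psd(f) * |h_r(f)|**2 over [f_min, f_max].

    Uses the midpoint rule; the finite limits stand in for the infinite
    ones and must cover the passband of the filter.
    """
    df = (f_max - f_min) / steps
    total = 0.0
    for i in range(steps):
        f = f_min + (i + 0.5) * df
        total += psd(f) * abs(h_r(f)) ** 2 * df
    return total

# Assumed example: flat two-sided noise density and an ideal low-pass filter.
flat_psd = lambda f: 1e-3
ideal_lowpass = lambda f: 1.0 if abs(f) <= 100.0 else 0.0

sigma2 = noise_variance(flat_psd, ideal_lowpass, -200.0, 200.0)
print(sigma2)  # close to 1e-3 * 2 * 100 = 0.2
```

For this idealised case the integral reduces to the noise density multiplied by the two-sided bandwidth of the filter.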

The probability of making an error is given by:

<math>P_e = P_{e|H_0} \cdot P_{H_0} + P_{e|H_1} \cdot P_{H_1} + \cdots + P_{e|H_{L-1}} \cdot P_{H_{L-1}}</math>

where, for example, <math>P_{e|H_0}</math> is the conditional probability of making an error given that a symbol <math>v_0</math> has been sent and <math>P_{H_0}</math> is the probability of sending a symbol <math>v_0</math>.

If the probability of sending any symbol is the same, then:

<math>P_{H_i} = \frac{1}{L}</math>

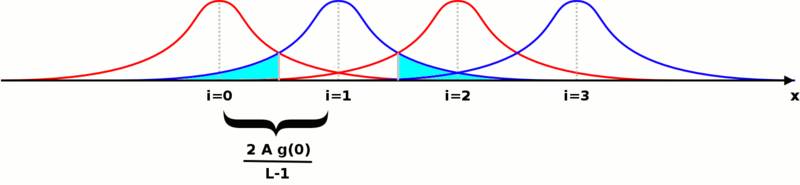

If we represent all the probability density functions on the same plot against the possible value of the voltage to be transmitted, we get a picture like this (the particular case of ''L'' = 4 is shown):

[[File:Ask dia calc prob.png|center|800px]]

The probability of making an error after a single symbol has been sent is the area of its Gaussian function that falls under the functions for the other symbols. It is shown in cyan for just one of them. If we call <math>P^+</math> the area under one side of the Gaussian, the sum of all the areas will be <math>2 L P^+ - 2 P^+</math>. The total probability of making an error can be expressed in the form:

<math>P_e = 2 \left( 1 - \frac{1}{L} \right) P^+</math>
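The counting behind this expression can be spelled out as a short worked step, assuming equiprobable symbols as stated above. The two outermost levels have a neighbour on one side only and each contribute one tail of area <math>P^+</math>, while each of the <math>L-2</math> inner levels contributes two tails:

<math>P_e = \frac{1}{L} \left[ 2 \cdot P^+ + (L-2) \cdot 2 P^+ \right] = \frac{2 L P^+ - 2 P^+}{L} = 2 \left( 1 - \frac{1}{L} \right) P^+</math>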

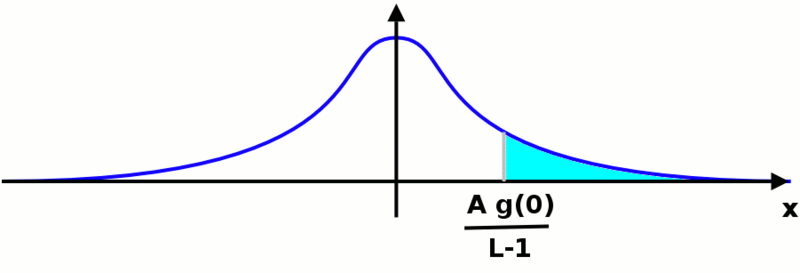

We now have to calculate the value of <math>P^+</math>. To do that, we can move the origin of the reference wherever we want: the area below the function will not change. We are in a situation like the one shown in the following picture:

[[File:Ask dia calc prob 2.png|center|800px]]

It does not matter which Gaussian function we are considering; the area we want to calculate will be the same. The value we are looking for is given by the following integral:

<math>P^+ = \int_{\frac{A g(0)}{L-1}}^{\infty} \frac{1}{\sqrt{2 \pi} \sigma_N} e^{-\frac{x^2}{2 \sigma_N^2}} d x = \frac{1}{2} \operatorname{erfc} \left( \frac{A g(0)}{\sqrt{2} (L-1) \sigma_N} \right) </math>

where erfc() is the complementary error function. Putting all these results together, the probability of making an error is:

<math>P_e = \left( 1 - \frac{1}{L} \right) \operatorname{erfc} \left( \frac{A g(0)}{\sqrt{2} (L-1) \sigma_N} \right) </math>

From this formula we can see that the probability of making an error decreases if the maximum amplitude of the transmitted signal or the amplification of the system becomes greater; on the other hand, it increases if the number of levels or the power of the noise becomes greater.

This relationship is valid when there is no intersymbol interference, i.e. ''g''(''t'') is a Nyquist function.
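The closed-form result can be evaluated, and loosely cross-checked by simulation, with the sketch below. It is an illustration under stated assumptions rather than a reference implementation: the receiver is assumed to decide for the nearest level, there is no intersymbol interference, symbols are equiprobable, and the function names, seed and example values are hypothetical.

```python
import math
import random

def ask_error_probability(A, L, sigma_n, g0=1.0):
    """P_e = (1 - 1/L) * erfc(A*g0 / (sqrt(2)*(L-1)*sigma_n))."""
    return (1 - 1 / L) * math.erfc(A * g0 / (math.sqrt(2) * (L - 1) * sigma_n))

def simulate_error_rate(A, L, sigma_n, g0=1.0, trials=100_000, seed=1):
    """Monte Carlo estimate of the symbol error rate without ISI.

    Assumes equiprobable symbols, additive Gaussian noise of standard
    deviation sigma_n, and a nearest-level decision rule.
    """
    rng = random.Random(seed)
    delta = 2 * A / (L - 1)
    errors = 0
    for _ in range(trials):
        i = rng.randrange(L)                          # transmitted level index
        z = (delta * i - A) * g0 + rng.gauss(0.0, sigma_n)
        i_hat = round((z / g0 + A) / delta)           # nearest level index
        i_hat = min(max(i_hat, 0), L - 1)             # clamp to valid levels
        errors += i_hat != i
    return errors / trials

print(ask_error_probability(3.0, 4, 0.5))
print(simulate_error_rate(3.0, 4, 0.5))
```

Raising `A` lowers the computed probability, while raising `L` or `sigma_n` increases it, in line with the trends described above.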

==See also==

* [[Frequency-shift keying]] (FSK)

==External links==

*[http://www.maxim-ic.com/appnotes.cfm/an_pk/2815/CMP/WP-21 Calculating the Sensitivity of an Amplitude Shift Keying (ASK) Receiver]

{{DEFAULTSORT:Amplitude-Shift Keying}}

[[Category:Quantized radio modulation modes]]

[[Category:Applied probability]]

[[Category:Fault tolerance]]

Revision as of 01:45, 13 August 2014
